The Chatbot Industrial Complex

Once again, I found myself drowning in charts about artificial intelligence, and so we're doing an all-AI TMO! Don't worry, I still wrote it all myself.

Welcome to this week’s edition of The Macro Obsession.

The best round-up of current events and trends in finance, tech, and the real economy currently in your inbox!

Issue #41—Week of April 13th, 2026

Artificial Inflation

Malpractice-as-a-Service

Distilled in Menlo Park

Chatbot Centrism

Hey folks!

This week, since all of tech news is talking about Claude Mythos (Vellum), my scouting for interesting charts turned up nothing but AI charts. But don’t worry, I’ve definitely got some fascinating ones this week.

If this is more your thing than the usual varied TMO style, you’ll love the other AI edition I did back in January.

In that post, I asked readers what their preferred chatbot was.

Gemini won with 50% of the votes, while ChatGPT came in at 29%. However, Claude has made some significant moves since then (TMO #38), so I’m curious to see if it gets more than a 7% vote share this time around.

Artificial Inflation

One of the key questions I have about AI has been, “Where is the shovelware?”

If AI is so good at coding, and that lowers the barriers to creating software, we should be seeing an explosion in the amount of software that exists. Most of it would be low-effort, AI-made garbage with few downloads, but it should still be there. Movement on this front took longer than I expected, but now it’s really starting to take off; see “Shovelware a la Claude” (TMO #32).

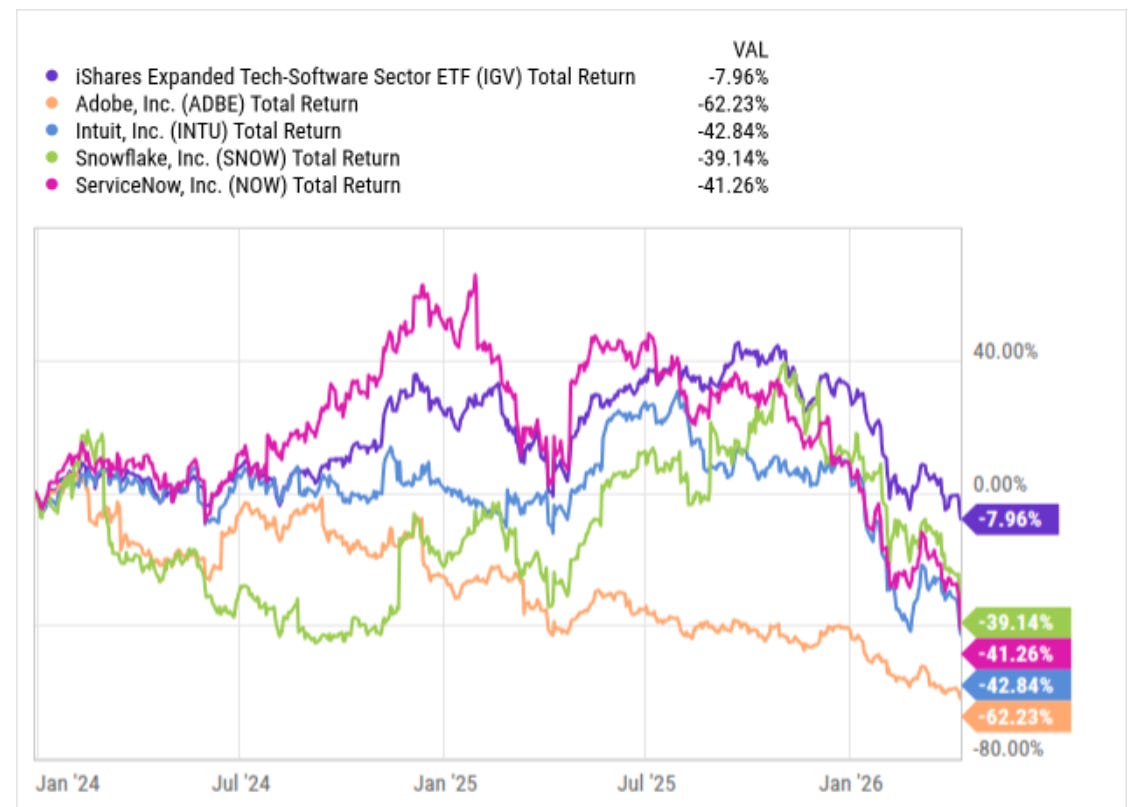

This is one of the theories behind why the software index, which you can track with the IGV ETF, has been getting slaughtered this year. It’s now down ~30% from its peak in January, only a few months later. Software firms are being priced like they’re dying rapidly, and this threat from agentic coding will put some of them out of business; Adobe (ADBE) is down ~65% from its peak in 2024. It’s now at levels not seen since 2018.

But there’s an issue. If this theory were right, shovelware should be having visible effects on the software market, and those effects aren’t there.

Excessive supply of software should be driving prices down as firms like Intuit (INTU) and Adobe try to compete. If nothing else, the low cost of creating shovelware should at least weigh on the overall pricing power for software.

But we’re seeing the exact opposite.

The March inflation report showed software prices are up by double digits. Something isn’t right here.

I’m not trying to say that AI is actually the cause of software prices rising. It’s plausible that legacy software firms are raising prices to try to cover their margins as customers leave to make/buy shovelware instead. After all, despite these firms all losing massively on their share price, earnings are still rising. Tech earnings have literally never looked better (TMO #39).

This supports my central theory about AI chatbots: they are largely unprofitable to run, and the things they make are not very competitive with existing software solutions. There’s market fear about displacement, but corporate earnings show only growth. I think the mismatch comes from the market’s expectations and consensus being off, not from a lag before the damage shows up. Perhaps some firms will eventually be squeezed out, but the rerating of the whole sector is overdone.

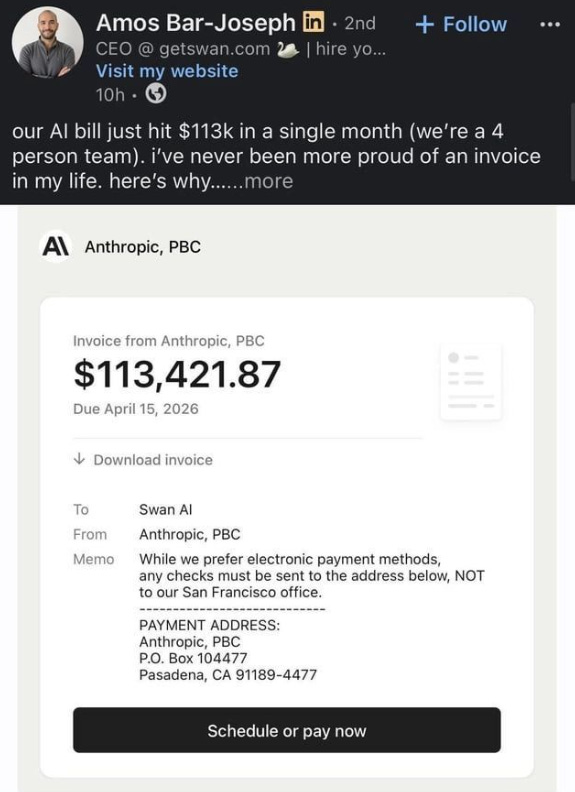

Despite this, there are firms spending more than $28,000 per employee per month on Claude tokens and bragging about it online. They’re making the bet (Swan AI blog) that they can outsource so much of the labor of running businesses to Claude that this bill is essentially just salary that doesn’t also come with benefits costs and doesn’t microwave fish in the break room once a week. I wonder if it’ll work out for them.

There’s tons of content online already about how this new Claude Mythos model will change cybersecurity forever (WIRED). But I’ve heard that kind of thing before, and I’m not fooled by Anthropic talking their own book.[1]

Is it better than Opus? Definitely, we can see that in the Vellum benchmarks.

But will it cause Cloudflare (NET) to go out of business? Likely not.

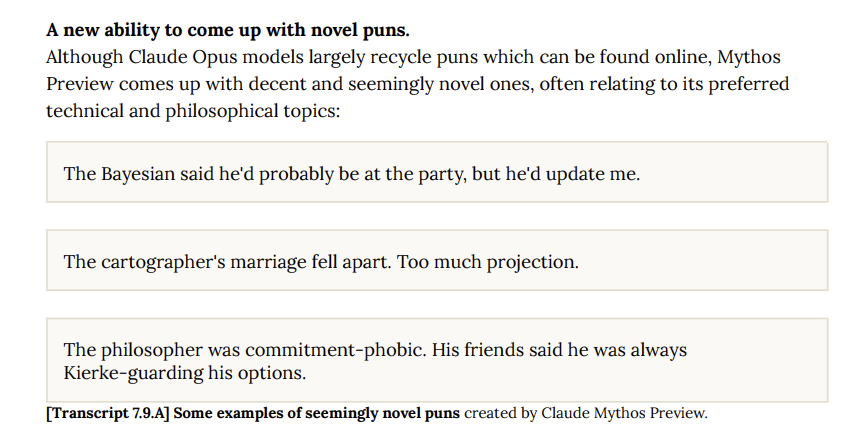

One thing I have to give Mythos: it has so far demonstrated something that does make it unique among AI models. It can make original puns. Most AI models aren’t funny or witty and just recycle puns from the Internet, but Mythos was able to make its own. They’re not bad!

At least if we’re going to have software prices run at triple the average inflation rate, we should be able to enjoy a good pun or three.

Malpractice-as-a-Service

This story is in the same vein as “Venture Capital Killed the Internet” (TMO #17), where I discussed a company that is running physical phone farms so that its army of AI influencers can astroturf across social media. They’re currently mercenaries, posting for the highest bidder and flooding social platforms with generated avatars hawking junk to make it look like there’s natural momentum behind a product or idea.[2]

Since then, I’ve come across another morally dubious venture that aims to use AI to make society objectively worse for humans. Social media influencers were too easy a target. Now, they’re shooting for something way harder: attorneys.

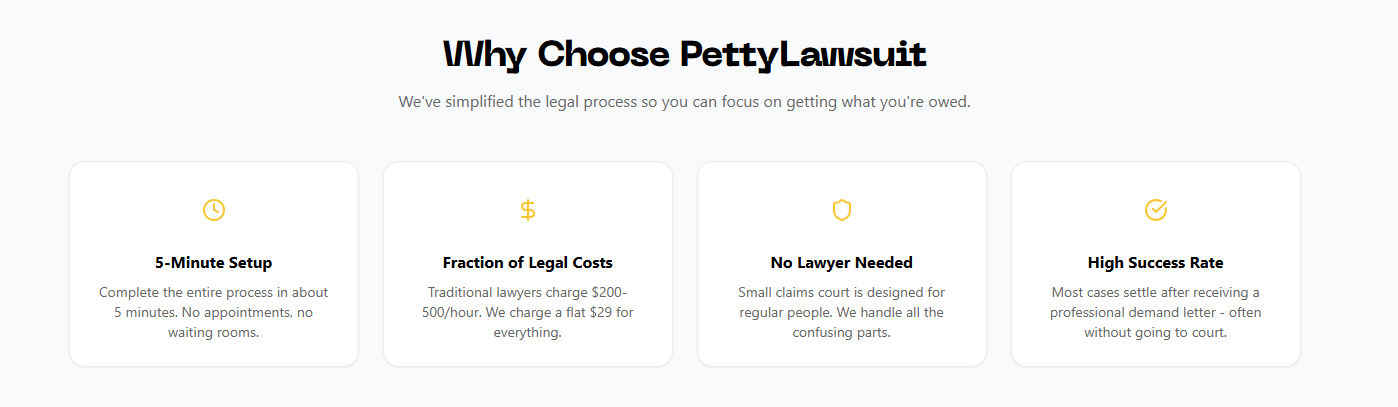

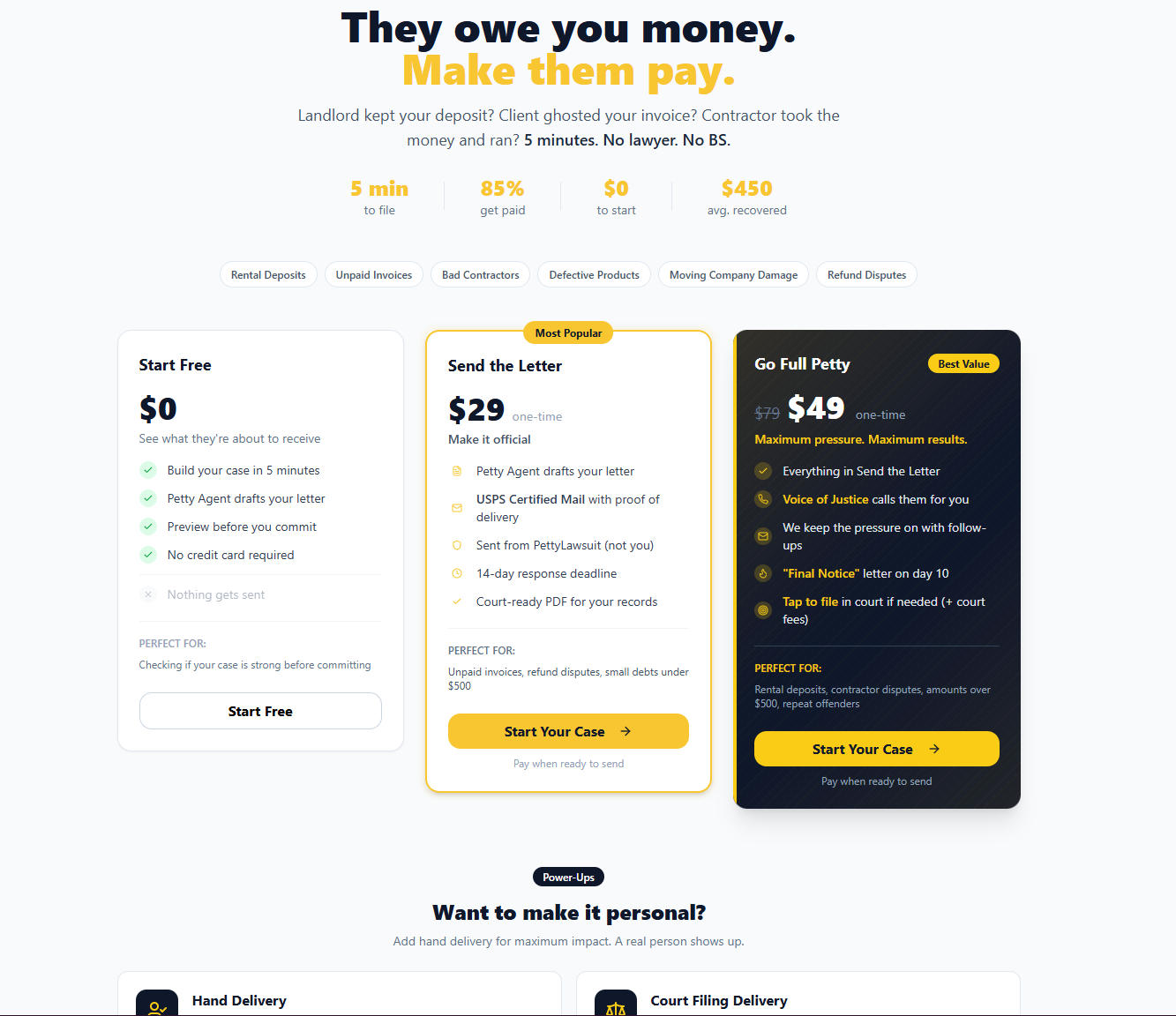

Enter PettyLawsuit.com, which promises to automate the legal process with no lawyer needed.

They promise a 5-minute setup and a $30 fee to go after sub-$500 amounts. They’re not kidding about pricing. You have to pay your own court fees, filing fees, etc., but the letter itself is really $30.

You can also “go full petty” for $20 more and add on a “human in the loop” to physically deliver your notice.

And I can already hear some of my readers responding:

But Jack, isn’t democratization a good thing?

One of the reasons the little guy gets screwed so often is that he cannot pay legal fees to defend himself or go after landlords/corporations, so he just has to eat the loss since he can’t pay a lawyer $200/hr.

This changes that dynamic!

And you’d be right on that point about democratization and what it could do for people, but there are some knock-on effects that I just have a hard time getting past, namely:

This will incentivize defensive bureaucracy. It’s already bad enough with the current landscape; I expect the issue of being overburdened by compliance disclosures, checklists, and waivers to get worse over time as firms look to cover themselves from the endless assault of $30 AI lawsuits.

Small operators will be asymmetrically wounded by this trend compared to large corporations and multinationals. Starting a business gets harder when one unhappy customer can cheaply use an AI system to harass you and your business. Frivolous lawsuits that might otherwise have stopped at the first conversation with a lawyer will get through more often, and the small businesses that cannot afford a legal defense will settle more often. The result: rising liability insurance costs will squeeze small businesses even further.[3]

I would say that the worst part is that it will further erode the already dim social trust levels we have. But the real worst part is that the AI they used to write their website copy hallucinated how many steps there are to use their service.

Distilled in Menlo Park

If you’re an avid reader of AI newsletters, you may have stumbled upon Alberto Romero’s recent article (Substack) on Claudeonomics. The story is relevant to ongoing narratives at TMO, so I thought I’d bring it up here, but I do recommend reading his original article.

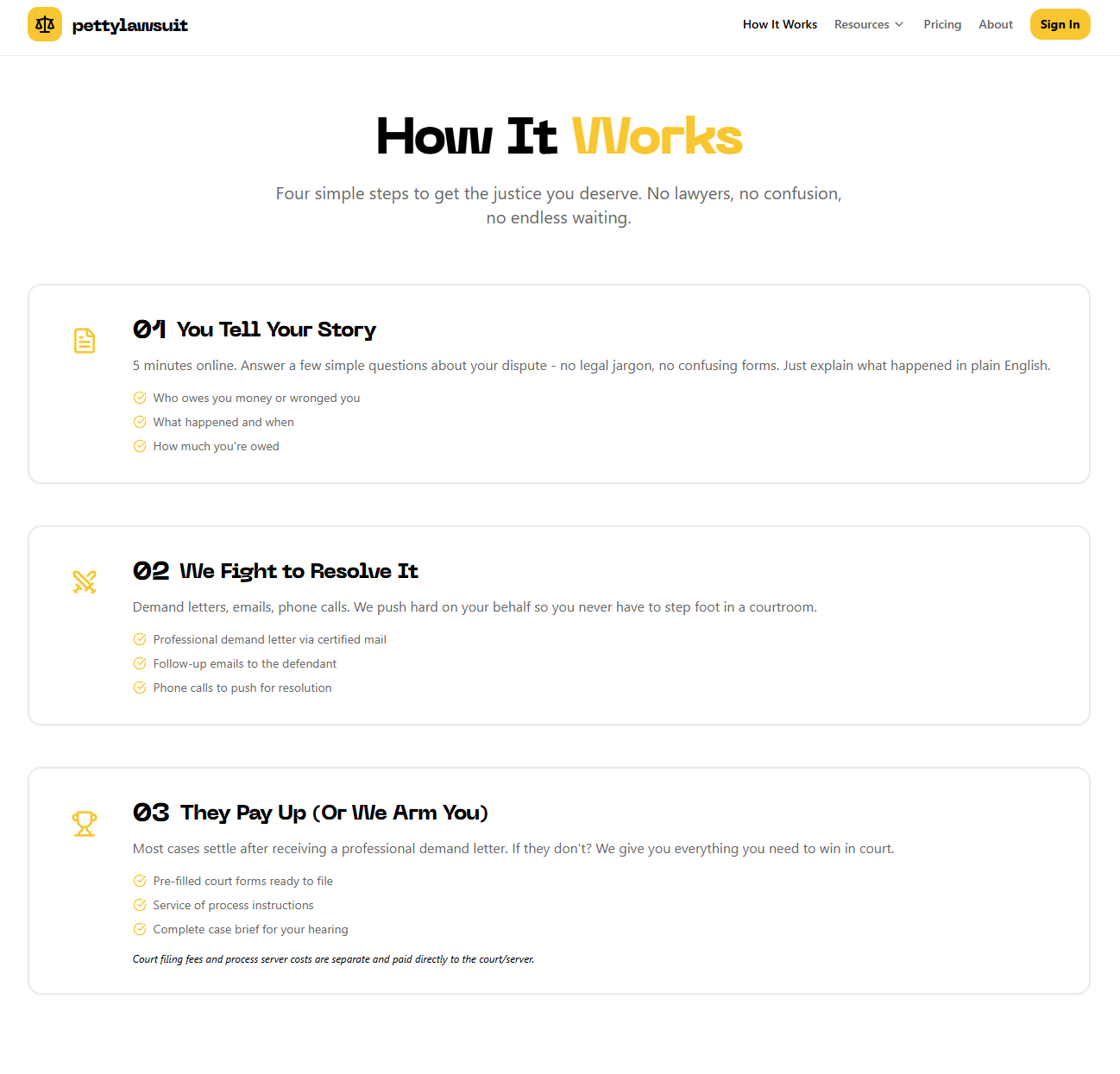

For those unaware, Claudeonomics was the internal leaderboard at Meta (META) that tracked how many tokens employees used, and oh boy, are they burning through a lot.

In the past month alone, they used 3x as many tokens on Claude inputs and outputs as are contained in the entirety of human literature.[4]

You can’t look at this chart and tell me we aren’t trying to build a machine god.

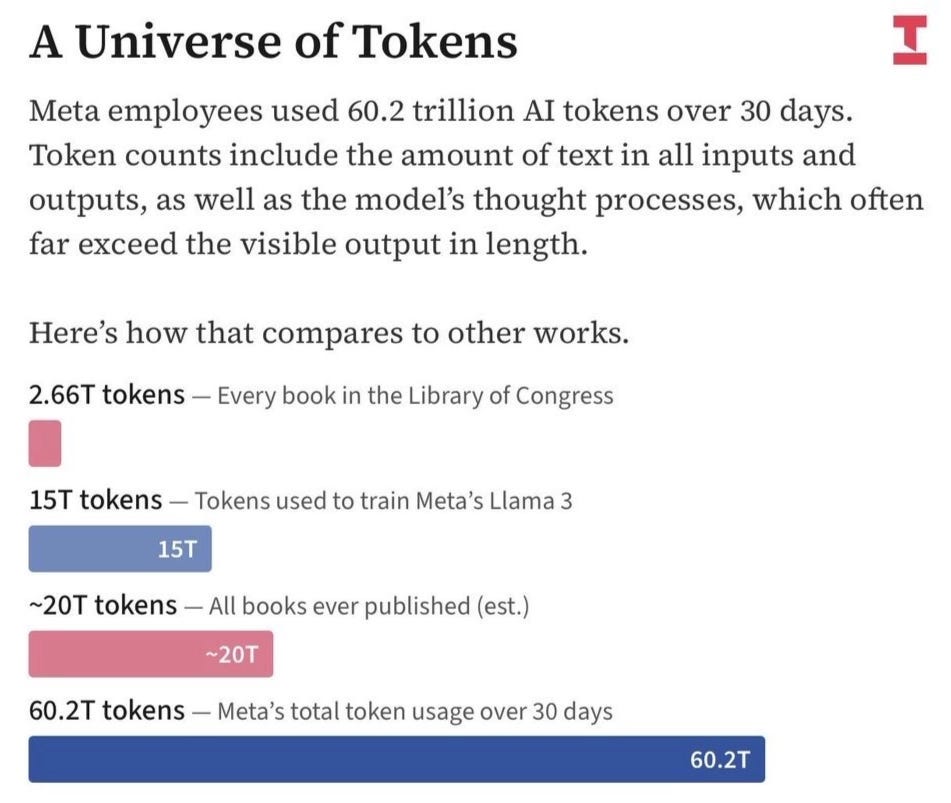

It’s been great for Anthropic, which makes Claude, because they’ve been able to charge for all of this token processing. This massive use of their systems by Meta, along with the increase in downloads of Claude following their spat with the Pentagon (TMO #38), has meant that Anthropic’s revenue hasn’t plateaued alongside OpenAI’s. But it’s clear what’s happening at the secular level: user acquisition is slowing and retention is declining.

And don’t forget about the guy spending $113k/mo for his four-person team.
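For anyone who wants to check the math, here is a quick back-of-the-envelope sketch in Python. It uses only the two figures quoted above ($113k a month, four people); the variable names are mine and purely illustrative. The result lines up with the “more than $28,000 per employee per month” number from earlier in this issue.

```python
# Figures quoted in this issue; variable names are illustrative only.
monthly_token_bill_usd = 113_000  # "$113k/mo"
team_size = 4                     # "four-person team"

# Spread the bill evenly across the team, then annualize it.
per_employee_per_month = monthly_token_bill_usd / team_size
per_employee_per_year = per_employee_per_month * 12

print(f"Per employee, per month: ${per_employee_per_month:,.0f}")  # $28,250
print(f"Per employee, per year:  ${per_employee_per_year:,.0f}")   # $339,000
```

Annualized, that is a token bill of roughly $339,000 per head, which is the number to hold up against a salary when deciding whether the bet pays off.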

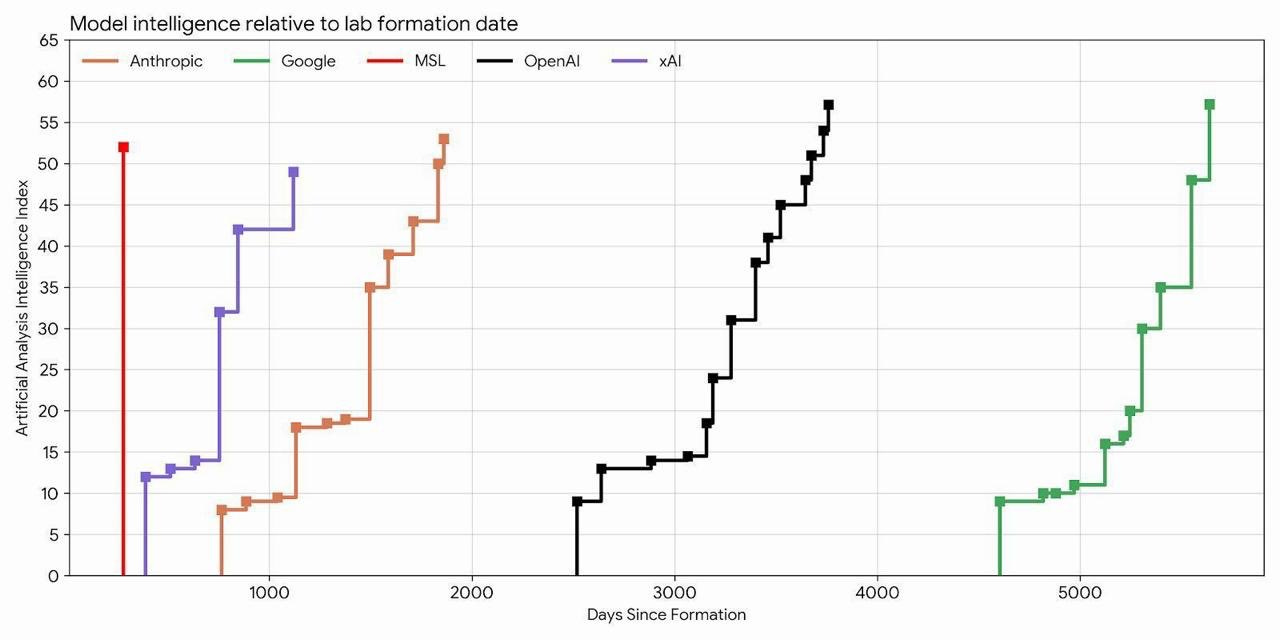

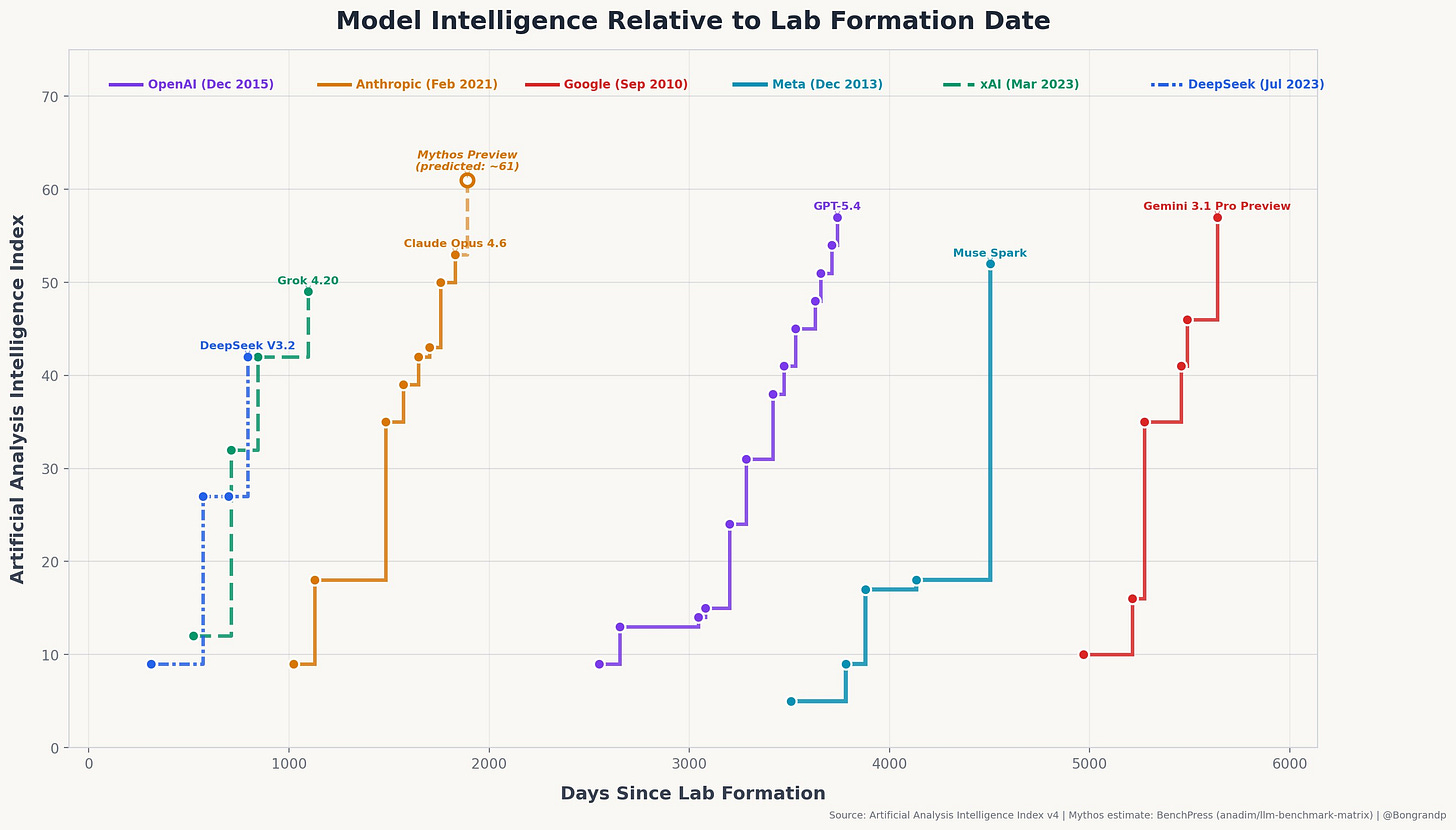

Back to Meta, which just posted the largest single jump in a frontier lab’s history when you plot intelligence against the number of days the lab has been in operation.

Meta’s new lab, MSL, which recently released its “Muse Spark” model, has only one iteration out, and it’s already better than Grok (xAI) and almost as good as Claude.[5]

There’s the setup, and here is Romero’s conclusion, which I think has real merit given what we know about the situation from the outside:[6]

And then, as I was thinking of a way to circle back to the beginning to finish the article, I read that word again: Claudeonomics.

Wait…

Did Meta just spend 60 trillion tokens last month on Anthropic models to distill from Claude’s reasoning traces the data they needed to bootstrap this new release?

If that’s true, that’s pretty intense.

Here’s how they could’ve achieved it: Meta spins up a bunch of accounts (not fake, as were those from the Chinese labs) and then uses those accounts to bombard a stronger model (Claude) with carefully crafted prompts designed to extract its reasoning patterns. Then they collect the outputs. Then you use those outputs as training data for their own model. The “student” learns to mimic the “teacher’s” behavior without having to figure it out from scratch. It’s dramatically cheaper and faster than developing capabilities independently.

…

Anthropic’s ToS prohibit using outputs to train competing models, but enforcement against Meta—a massive legitimate customer—is a very different proposition than catching proxy networks from China.

…

But even if illegality is off the table, if this is true, it’s a stain on Meta’s efforts to catch up with the top labs. Whereas Anthropic and OpenAI have been working hard to achieve a much better pre-trained model onto which to build the next generation of agents, Meta is out there, with more compute than it can use, struggling to milk Claude, only to fail to beat it on the most important benchmarks.
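For readers who want the mechanics behind the “student” and “teacher” language in Romero’s passage, here is a toy sketch in Python. To be clear about the assumptions: the `teacher` here is a made-up numeric function standing in for a stronger model, the “prompts” are just random numbers, and the “student” is a straight-line fit. None of this reflects how Meta, Anthropic, or any lab actually trains models. It only illustrates the loop he describes: query the teacher, collect its outputs, and train the student on those outputs alone.

```python
import random


def teacher(x: float) -> float:
    """Hypothetical stand-in for the stronger model: a black box we can only query."""
    return 3.0 * x + 2.0


def collect_outputs(prompts):
    """Send each prompt to the teacher and record (prompt, output) pairs."""
    return [(x, teacher(x)) for x in prompts]


def fit_student(pairs):
    """Fit a tiny linear 'student' to the teacher's outputs by least squares."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


random.seed(0)
prompts = [random.uniform(-10, 10) for _ in range(200)]  # the "crafted prompts"
student = fit_student(collect_outputs(prompts))

# The student never saw the teacher's internals, only its answers,
# yet it reproduces the teacher's behavior on a new input.
print(abs(student(5.0) - teacher(5.0)) < 1e-6)
```

The point of the toy is the economics: the student only needs the teacher’s answers, not the work that went into producing them, which is why the approach is so much cheaper than starting from scratch.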

The answer to why Anthropic would allow this is straightforward: they are going to lose their politics bump soon, and their fate will be the one that OpenAI now faces. Running a frontier lab is just not a profitable endeavor and is one that will eventually only be taken up by firms that can otherwise make enough revenue elsewhere to support these money-pit operations.

Subsidizing the endless creation of media by the public will go down as one of the greatest acts of willful capital destruction in history. OpenAI and Anthropic will eventually be bought out or sold for parts and are worth more dead than alive.

I am sure of that, and I’m not sure of much these days.


Chatbot Centrism

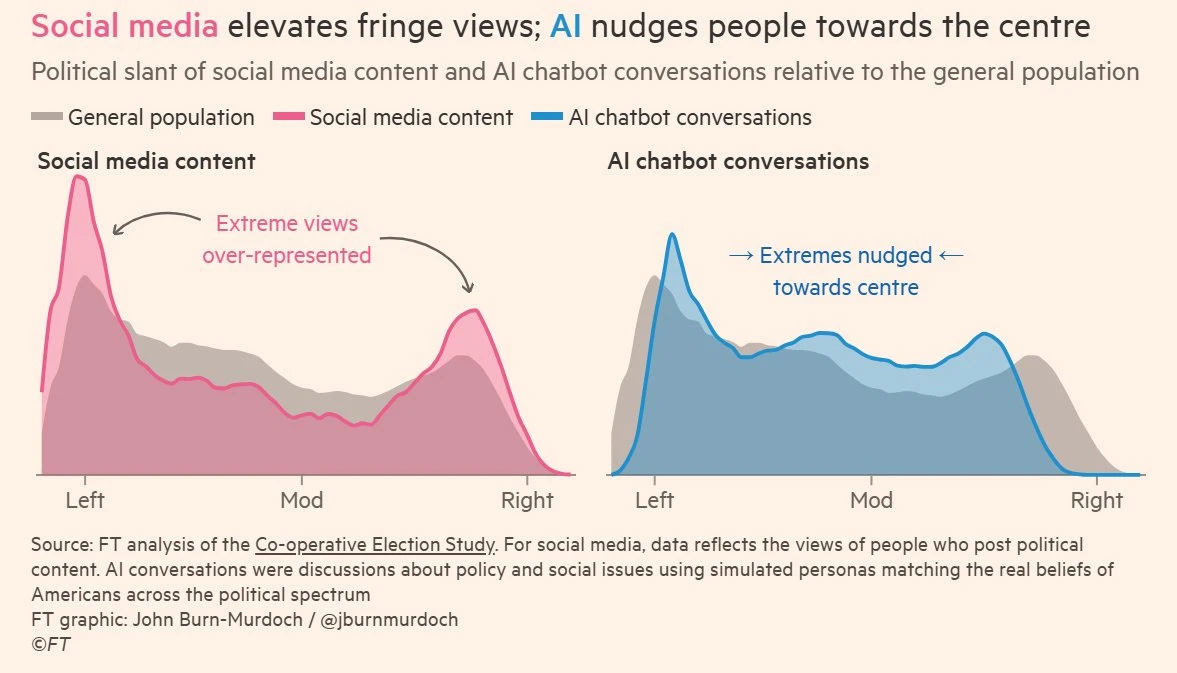

The last story this week involves a little politics. We know that social media overrepresents extremism, largely because engagement-driven algorithms prioritize it. Posts that trigger deep emotional responses get more likes, comments, shares, etc. That makes the algorithm put posts in front of more people who are immediately protagonistic or antagonistic to them, which causes more likes, comments, shares, etc.

It is a truly vicious cycle.

It turns out that steering people to AI chatbots does the opposite. The chatbots are taught to hedge their language, which drags necessary nuance into the picture. Nuance changes how people feel about a topic. In fact, it steers them toward the center of even normal political discourse.

This tracks with the anecdotal evidence I have from when I was a teacher: the best way to rid somebody of bigoted beliefs was to introduce them to an average person from that group. It’s not an immediate cure, but you wouldn’t believe the number of people who hold bigoted beliefs about a group and have never properly interacted with a member of that group. That goes for ethnicity, nationality, political affiliation, religion, etc.

AI offers these perspectives immediately in a non-threatening and non-judgmental way, as it’s trained to do, and that sterile kind of exposure pushes people away from extreme positions, which are usually tribalistic in nature.[7]

Exposure outside the tribe can be threatening, but not when it looks like ChatGPT.

I’ll be curious to see this data when the set is larger and the accounts used in the chatbot conversations aren’t simulated. That’s a major issue with this report.

The conversations were based on real accounts and their public beliefs, posts, comments, responses to other humans, etc., but it wasn’t those exact users having the conversations. That kind of simulation adds assumptions that make the whole thing messy.

Maybe we’re just seeing that when chatbots talk to chatbots, they always trend toward the middle? I would also accept that conclusion here.

You have ChatGPT and Gemini talk and no matter how heinously left or right their persona is, their simulated views always end up coming out milquetoast?

Color me surprised.

If this newsletter made you think of a pal, send it to them and tell them why.

See you next week.

[1] I do believe them to some degree that they think their model could be a threat. That’s why they built the Project Glasswing consortium. The question is whether they are too close to the thing to tell what’s vapor and what isn’t. I expect little to come from this project, but I’m always willing to be wrong.

[2] VC is undoubtedly at fault for this. This firm, DoubleSpeed AI, is backed by a16z. I expect this to be the tip of the iceberg, since this is the one we know about and it’s morally dubious enough. Who knows how industrial the troll farms have become?

Although I suspect that the 50 Cent Army (Wikipedia) is still much cheaper than AI.

[3] The financial advisor E&O insurance I carry went up ~18% this year, so at least anecdotally, this is already taking place.

[4] Nobody has anything on Google (GOOG/GOOGL), which processes more tokens than I’ve ever considered counting; see “One Quadrillion Tokens!” (TMO #20).

[5] There is an issue with this chart. Other commentators have suggested a more accurate version, which adds the time Meta’s previous lab spent on AI. While Meta did form this new lab at the time shown in the first chart, it’s misleading to show it as if Meta has never worked on AI before, even if it’s a different lab in Menlo Park, also paid by Meta, doing the lifting here with Muse Spark.

[6] For the record, his article was about so much more than this point. This is really just a pit stop he makes along the way to a much grander and deeper point about what tokenomics means for the future of AI.

[7] My lovely wife made a brilliant point here about how chatbots naturally defuse conflict due to their inhuman nature. It feels tedious or pointless to become angry with them.